2025 Review: How AI for Developers Evolved

AI for coding evolved from autocomplete to autonomous agents in 2025. See how GPT-5, Claude Code, Cursor AI, and other dev AI tools transformed software development—with SWE-bench scores jumping from 70% to 80%.

AI Tools 2025 Review

2026 is almost here. And if you blink, you might miss just how far AI for coding has come in the past twelve months.

I've been tracking every major release, every benchmark breakthrough, and every tool launch throughout 2025. The pace has been relentless. What started as "helpful autocomplete" has evolved into autonomous agents that can work on your codebase for hours without supervision.

Let me walk you through the highlights. By the end, you'll feel that mix of nostalgia and excitement—and maybe a bit of "wait, that was THIS year?"

The Models: A Race to the Top

Top 2025 AI models for coding

2025 was the year AI models got seriously good at coding. Here's what each major player shipped:

OpenAI

- GPT-4.1 (April) — 1 million token context window. Finally, your entire codebase fits in one prompt.

- GPT-5 (August) — 74.9% on SWE-bench Verified. The model that made "vibe coding" actually viable for production.

- GPT-5.1 (November) — Native `apply_patch` and `shell` tools. Built for agents, not just chat.

- GPT-5.2 (December) — State-of-the-art on SWE-Bench Pro. The most capable coding model to date.
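Whether your codebase really fits in a 1 million token window is easy to sanity-check. Here is a minimal sketch, assuming a rough average of 4 characters per token (a rule of thumb, not any model's actual tokenizer) and a hypothetical set of file extensions. The function names are mine, not part of any vendor SDK.

```python
import os

CHARS_PER_TOKEN = 4          # assumption: rough average, not a real tokenizer
CONTEXT_WINDOW = 1_000_000   # the 1M-token window cited above


def estimate_tokens(root, extensions=(".py", ".js", ".ts", ".md")):
    """Walk `root` and return a rough token estimate for matching files."""
    total_chars = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total_chars += len(handle.read())
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root, window=CONTEXT_WINDOW):
    """True if the estimated token count is within the context window."""
    return estimate_tokens(root) <= window
```

Calling `fits_in_context(".")` from a repository root gives a quick yes or no. Treat the answer as a ballpark, since real token counts vary by model and by language.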

Anthropic

- Claude 3.7 Sonnet (February) — First "hybrid reasoning" model. Extended thinking with up to 128K thinking tokens.

- Claude Opus 4 & Sonnet 4 (May) — Opus 4 can work autonomously for 7+ hours. Yes, hours.

- Claude Sonnet 4.5 (September) — 77.2% on SWE-bench. Can maintain focus for 30+ hours on complex tasks.

- Claude Opus 4.5 (November) — First model to break 80% on SWE-bench Verified (80.9%). A new high-water mark.

Google

- Gemini 2.0 Flash GA (February) — Native tool use with 1M token context.

- Gemini 2.5 Pro (March) — Debuted at #1 on LMArena as a "thinking" model that reasons before it answers.

- Gemini 3 Pro (November) — Outperformed GPT-5 Pro on Humanity's Last Exam (41% vs 31.64%).

- Gemini 3 Flash (December) — Frontier intelligence at 1/4 the cost. 90.4% on GPQA Diamond.

xAI

- Grok 3 (February) — 1 million token context. Trained on 200,000 GPUs.

- Grok 4 (July) — First model to hit 50% on Humanity's Last Exam. Multi-agent architecture.

- Grok Code Fast 1 (August) — Specialized for agentic coding. 90%+ cache hit rates.

The Tools: From Assistants to Agents

This is where 2025 got really interesting. Dev AI tools stopped being "smart autocomplete" and became autonomous teammates. (If you're wondering which tool is right for your team, I wrote a detailed comparison of the best AI tools for developers.)

Cursor AI: The Breakout Star

Cursor AI

If there's one tool that defined 2025, it's Cursor. The trajectory is insane:

January: Version 0.45 introduced .cursor/rules for repository-level AI configuration.

June: Version 1.0 launched with:

- BugBot — Automated PR review that actually catches bugs

- Background Agents — Cloud-based agents working while you sleep

- MCP one-click install — Model Context Protocol made easy

October: Version 2.0 dropped, and it was massive:

- Multi-Agents — Run up to 8 parallel agents in isolated workspaces

- Composer Model — Cursor's first frontier AI model, billed by Cursor as 4x faster than similarly intelligent models

- Voice Mode — Talk to your code editor. The future is here.

December: Version 2.2 added Debug Mode and Visual Editor. Then they acquired Graphite for $290M.

The numbers tell the story: from $0 to $1B+ ARR and a $29B valuation in under two years. Over 50% of Fortune 500 companies now use Cursor.

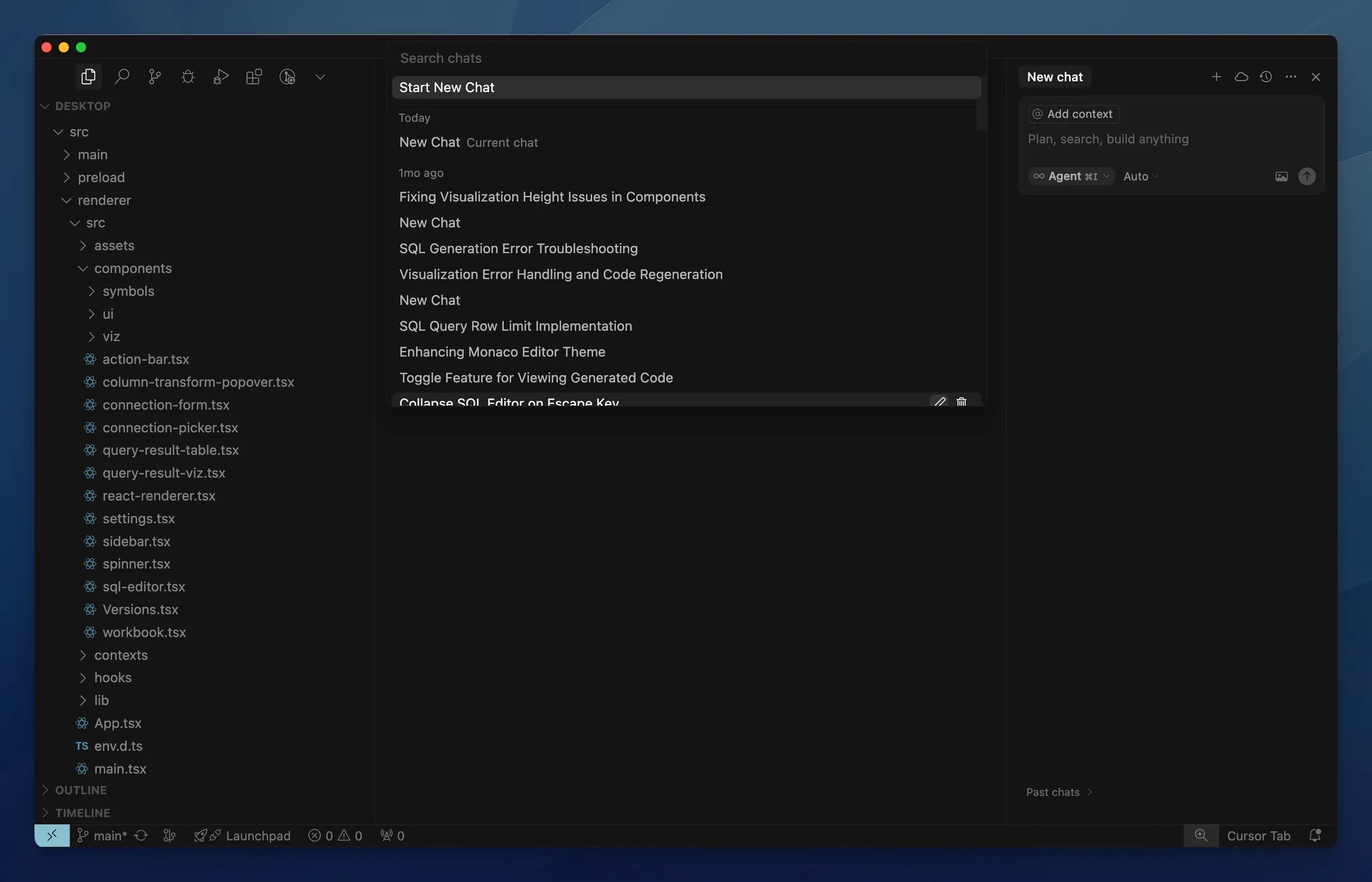

Claude Code: Anthropic's Answer

Claude Code

Claude Code went from research preview in February to full GA in May. The evolution:

- February: Research preview as a command-line coding agent

- May: GA with VS Code/JetBrains extensions and the Claude Code SDK

- September: Major updates including Checkpoints (instant rollback), Subagents (parallel task delegation), and Hooks (automated testing triggers)

What makes Claude Code special is the SDK—you can build custom agents on top of it. It's not just a tool; it's a platform.

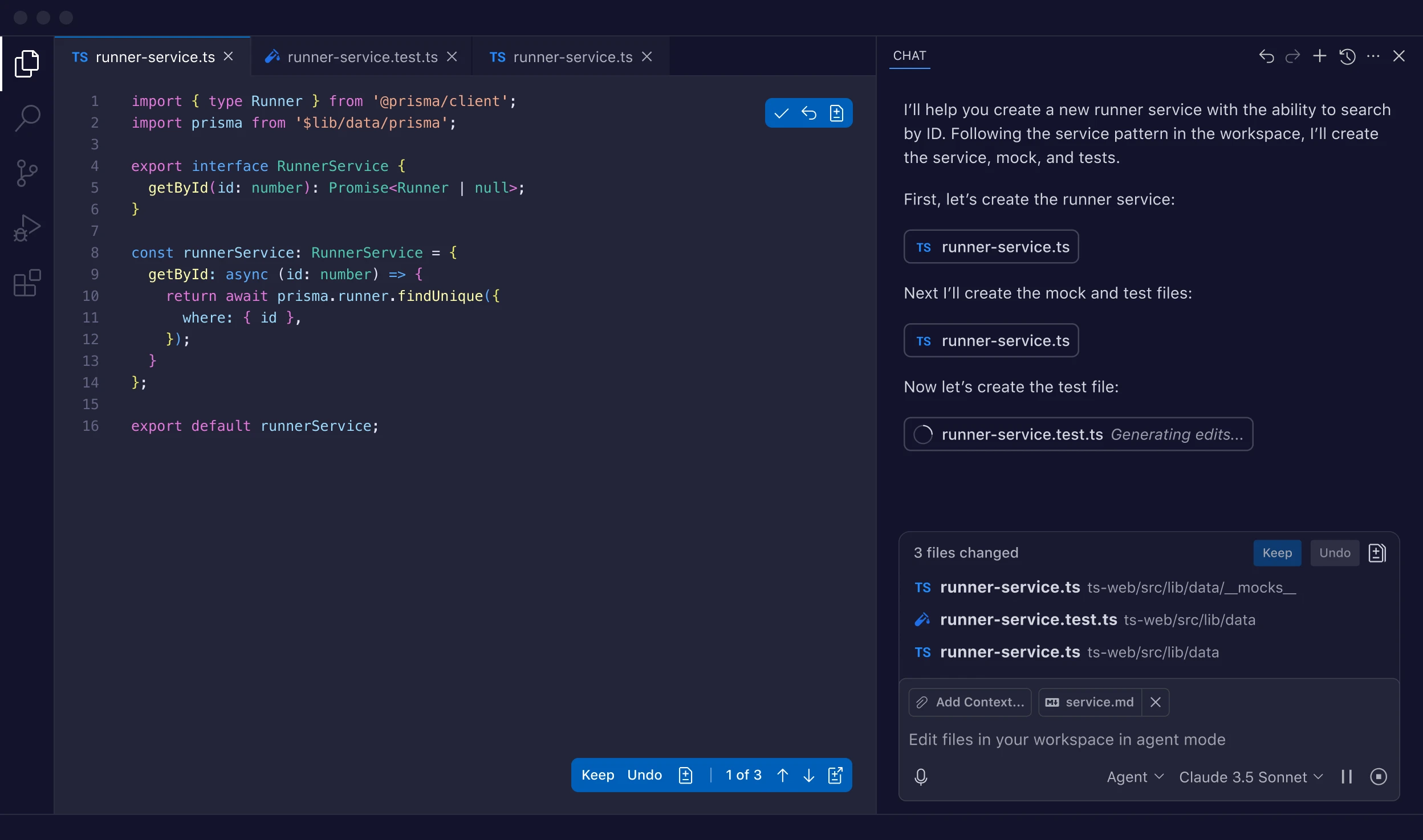

GitHub Copilot: The Incumbent Evolves

GitHub Copilot

GitHub Copilot didn't stand still while Cursor took mindshare:

February: Agent mode preview launched. Multi-file editing. Next Edit Suggestions that predict your next move.

May: The big one—Copilot coding agent announced at Microsoft Build. Assign a GitHub issue to Copilot, and it creates a PR for you. Asynchronous, autonomous, integrated.

December: Agent Skills arrived—teach Copilot specialized tasks with custom instructions and scripts.

GitHub also open-sourced Copilot Chat under the MIT license. The walls are coming down.

Google Antigravity: The New Contender

Google Antigravity

Google dropped Antigravity on November 18, alongside Gemini 3. This isn't just another IDE—it's an "agent-first" platform:

- Multi-agent orchestration — Manage multiple AI agents working in parallel

- Browser automation — Agents test and validate UI directly in Chrome

- Verifiable artifacts — Screenshots, plans, and walkthroughs as proof of work

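Antigravity was built by the former Windsurf team that Google hired as part of a $2.4 billion licensing deal in July (a deal for talent and technology, not a full acquisition). It launched as a free public preview. This is Google's bet on the agentic future.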

The SWE-Bench Story: Models Got Smarter

I don't think SWE-bench is perfect. But it's the closest thing we have to a standardized coding capability test. And the progression in 2025 is stunning:

| Model | SWE-bench Verified | Release |
|---|---|---|
| Claude 3.7 Sonnet | 70.3% | February 2025 |
| Claude Opus 4 | 72.5% | May 2025 |
| GPT-5 | 74.9% | August 2025 |
| Claude Sonnet 4.5 | 77.2% (82.0% with parallel test-time compute) | September 2025 |
| Claude Opus 4.5 | 80.9% | November 2025 |

From 70% to 80%+ in nine months. That's not incremental improvement—that's a capability jump.
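To put numbers on that jump, here is a quick back-of-the-envelope calculation using the scores from the table. It uses the 77.2% figure for Claude Sonnet 4.5. The "relative reduction in unresolved tasks" framing is my own way of reading the data, not an official SWE-bench metric.

```python
# SWE-bench Verified scores as listed in the table above (percent resolved).
scores = {
    "Claude 3.7 Sonnet": 70.3,
    "Claude Opus 4": 72.5,
    "GPT-5": 74.9,
    "Claude Sonnet 4.5": 77.2,
    "Claude Opus 4.5": 80.9,
}

first = scores["Claude 3.7 Sonnet"]
last = scores["Claude Opus 4.5"]

point_gain = last - first            # absolute gain in percentage points
unsolved_before = 100 - first        # share of tasks left unresolved in February
unsolved_after = 100 - last          # share of tasks left unresolved in November
relative_drop = (unsolved_before - unsolved_after) / unsolved_before

print(f"Gain: {point_gain:.1f} points")
print(f"Unresolved tasks: {unsolved_before:.1f}% -> {unsolved_after:.1f}%")
print(f"Relative reduction in unresolved tasks: {relative_drop:.0%}")
```

A 10.6 point gain sounds modest, but it means roughly a third of the tasks that were out of reach in February were being solved by November.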

At the start of 2025, AI struggled with multi-file refactors. By the end, models could work autonomously for hours, fix their own errors, and validate their solutions.

What It All Means

Here's the shift that happened in 2025: AI stopped being a coding assistant and became a coding agent.

The difference matters:

- Assistants wait for your prompts and suggest completions

- Agents take tasks, plan approaches, execute code, and iterate until done
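The agent half of that distinction boils down to a loop: try something, run it, check the result, and try again. Below is a toy sketch of that loop, not how any of the tools above are implemented. The `candidates` list is a stand-in for a model proposing successive attempts, and `run_agent` and `check_add` are hypothetical names of mine.

```python
from typing import Callable, List, Optional


def run_agent(
    candidates: List[str],
    check: Callable[[str], bool],
    max_steps: int = 5,
) -> Optional[str]:
    """Try candidate solutions in order until one passes `check`.

    `candidates` stands in for a model proposing successive attempts;
    a real agent would generate each attempt from the previous failure.
    """
    for step, attempt in enumerate(candidates[:max_steps], start=1):
        passed = check(attempt)          # execute and validate the attempt
        print(f"step {step}: {'pass' if passed else 'fail'}")
        if passed:
            return attempt               # done: stop iterating
    return None                          # gave up within the step budget


def check_add(source: str) -> bool:
    """Run the candidate code and test the `add` function it defines."""
    namespace: dict = {}
    try:
        exec(source, namespace)          # toy only; never exec untrusted code
        return namespace["add"](2, 3) == 5
    except Exception:
        return False


attempts = [
    "def add(a, b): return a - b",       # first try: wrong operator
    "def add(a, b): return a + b",       # second try: fixed
]
result = run_agent(attempts, check_add)
```

An assistant would stop after the first suggestion and hand it back to you. The loop keeps going until the check passes or the step budget runs out, which is the behavior that separates the two.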

Three patterns emerged that every dev team should understand:

1. Pricing democratized. Capable models now cost $1-5 per million input tokens. AI coding assistance is economically viable at scale.

2. MCP became the standard. Anthropic donated the Model Context Protocol to the Linux Foundation in December. OpenAI, Google, and every major IDE adopted it. This is how agents connect to your tools.

3. Specialized coding models won. GPT-5.2-Codex, Grok Code Fast 1, Cursor's Composer—purpose-built models outperform general-purpose ones on development tasks.
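To see what the pricing in point 1 means in practice, here is a rough cost sketch. The workload (200 agent runs a day at 50,000 input tokens each) is a made-up example, and the calculation covers input tokens only, so real bills that include output tokens will be higher.

```python
def input_cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost in USD for `tokens` input tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical workload: 200 agent runs a day, 50,000 input tokens each.
daily_tokens = 200 * 50_000   # 10 million input tokens

low = input_cost_usd(daily_tokens, 1.0)    # at $1 per million tokens
high = input_cost_usd(daily_tokens, 5.0)   # at $5 per million tokens
print(f"Daily input cost: ${low:.2f} to ${high:.2f}")
```

Under those assumptions the input side comes to between $10 and $50 a day for the whole team.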

The question for dev teams is no longer "should we use AI for coding?" It's "how do we integrate these rapidly evolving capabilities into our workflows?"

Looking Ahead to 2026

If 2025 taught us anything, it's that we consistently underestimate the pace of progress.

At the start of 2025, autonomous coding agents were experiments. By December, they were standard features in major IDEs. SWE-bench scores jumped 10+ percentage points. Cursor went from promising startup to $29B company.

What will 2026 bring? If the trajectory holds:

- Agents that can handle entire features end-to-end

- Multi-day autonomous coding sessions

- AI-native development workflows that look nothing like today's

The teams that learn to work with these tools now will have a massive advantage. The ones that wait might find themselves playing catch-up for years.

The agent era has arrived. Are you ready?

About the Author

Yaroslav Dobroskok is an AI for coding expert who has trained 900+ developers across 25+ companies on integrating AI tools into their development workflows. A GitHub Copilot beta tester and Udemy instructor, he helps development teams achieve 25-37% velocity improvements through structured AI adoption.

Connect on LinkedIn: https://www.linkedin.com/in/yardobr/